A Stage, Not a Product Launch

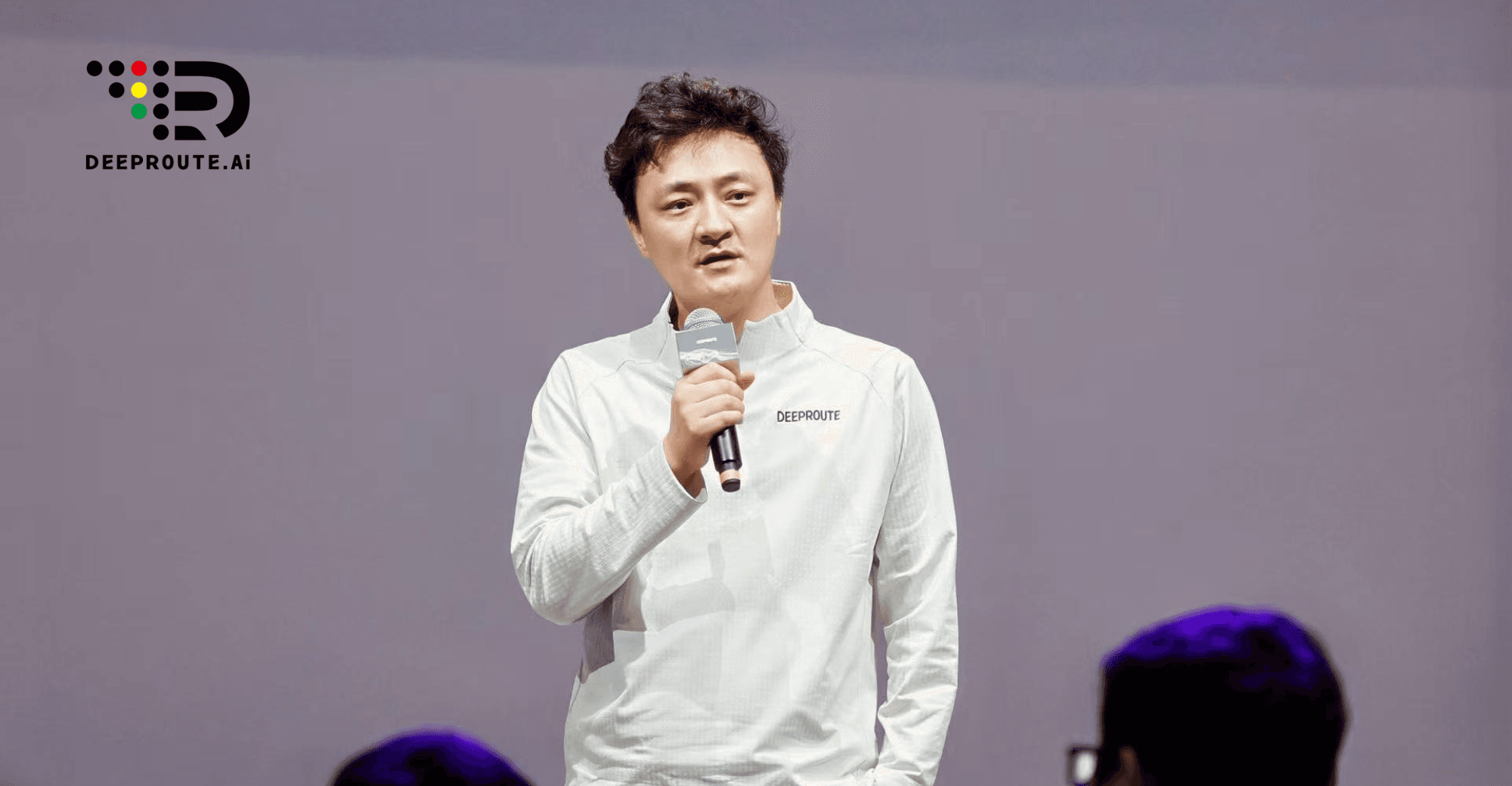

On April 25, 2026, in Hall A4 of the China International Exhibition Center in Beijing, DeepRoute.ai held a press conference that stood apart from the spectacle of concept cars and glossy spec sheets filling the surrounding halls. There was no vehicle on display. By leaving the showroom floor empty of hardware, DeepRoute sent a clear message: it is not a carmaker but a builder of the “brain.” Instead of a product launch, CEO Maxwell Zhou used the stage to lay out a broader thesis: that the true horizon for autonomous driving isn’t just better cars, but the creation of AI infrastructure for the physical world.

The event was a deliberate full-stack declaration. In the span of one afternoon, DeepRoute unveiled its top-level strategic framing (Physical AI), its core technical architecture (the Foundation Model), a glimpse of new product direction (a cabin-driving integrated Agent), a vision statement (“make peace of mind the norm”), and a market position: one in every three new urban NOA-equipped vehicles in China now runs on DeepRoute’s system — over 300,000 cars on the road.

But perhaps the most significant moment came midway through the event, when Chief Scientist Ruan Chong — formerly the head of R&D at DeepSeek and a core researcher in multimodal AI — stepped onto a public stage for the first time since joining the company. His presence, as much as anything he said, sent a signal that the industry is reading carefully.

From Better Supplier to Infrastructure Builder

The autonomous driving industry has long competed on feature benchmarks: which system handles rain better, which detects corner cases faster. DeepRoute’s framing at this year’s Beijing Auto Show broke from that paradigm entirely. Maxwell Zhou articulated a vision not of a better ADAS supplier, but of a company building the foundational AI layer for the physical world, “like electricity or telecommunications,” as he put it, “infrastructure that supports how the real world runs.”

This is a meaningful conceptual shift. “Software-defined vehicle” framing, which dominated the industry’s self-description for the better part of a decade, ultimately remained product-centric: software as the differentiator within a vehicle context. “Physical AI infrastructure” is capability-centric — it points to a layer of AI competence that can be deployed across a wide range of embodied agents, beginning with autonomous vehicles but explicitly not ending there.

Maxwell Zhou was direct in his reasoning: “What I care about most is safety. Ninety percent of what matters is safety — everything else is ten points.” The aspiration for 1,000+ kilometer MPCI (Miles Per Critical Intervention) by the end of 2026 is the concrete expression of that belief. “Tesla has already done this,” he said during the media roundtable. “If someone else can do it, we can do it too.”

The underlying argument is that this level of reliability is simply not achievable via the small-model paradigm. “Whatever you do in the small-model world, you cannot get ten times better by working harder,” Zhou said. “That’s not the path.”

The Signal in the Talent Migration

When Ruan Chong left DeepSeek to join DeepRoute as Chief Scientist, the industry took notice. His public debut at the Beijing Auto Show made the move official.

In the media roundtable, Ruan was characteristically direct about his reasoning. “I don’t like working on things with diminishing marginal returns,” he said. “Language models are very mature — almost any task can be handled by one model. But in multimodal and embodied intelligence, we’re nowhere near that stage. I’d rather be part of a frontier than a mature field.” He also cited a sense of mission: “If my presence or absence makes no difference, why am I doing it?”

This isn’t an isolated move. Maxwell Zhou framed it as a broader trend: “Today, the heads of multimodal research at major internet companies are coming into autonomous driving. That’s because once multimodal has a breakthrough, you can do precise experimental prediction — and then it generates impact in the physical world.” Gemini’s advances in early 2026, he argued, represented a capability inflection that made the physical-AI bet credible in a way it wasn’t in 2024 or 2025.

This talent migration matters beyond optics. In an industry where R&D approach determines everything, the arrival of researchers who built frontier LLM systems changes what kinds of problems a company can even attempt to solve.

The Architecture: One Model to Drive, Understand, and Judge

Ruan Chong’s keynote was titled “Being AI-Native in the Post-LLM Era” — a deliberate framing that locates DeepRoute’s technical approach within the broader trajectory of AI development, rather than within the narrower history of ADAS.

The core argument: traditional autonomous driving systems are built around many small, specialized models — one for pedestrian detection, one for traffic lights, one for trajectory planning, and so on. This creates what Ruan called “cognitive fragmentation” — brittle hand-offs between models, difficulty integrating new data, and a ceiling on what the overall system can learn.

DeepRoute’s Foundation Model unifies three capabilities under a single architecture:

The Driver Model handles actual driving decisions — taking sensor inputs and outputting actions (steering, braking, acceleration).

The Analyst Model integrates language modality. It can explain why the vehicle is making a given decision in natural language — “approaching an intersection with a blind corner, decelerating to account for potential pedestrian emergence” — while simultaneously handling data annotation across the R&D pipeline.

The Critic Model enables learning from negative data — not just mimicking good driving behavior, but understanding why certain behaviors (running red lights, competing for right-of-way) are bad, and actively avoiding them. “In the small-model era, you could only use positive data,” Ruan explained. “Now we can tell the model what bad looks like, and let it learn to avoid those patterns.”
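The idea of learning from negative data can be made concrete with a toy loss function. The sketch below is not DeepRoute's Critic Model, whose internals the event did not disclose. It uses a generic "unlikelihood" penalty as a stand-in, and the action set, logits, and function names are all hypothetical. It only shows how a training objective can reward a demonstrated action and, separately, penalize probability mass placed on an action labeled as bad.

```python
import math

# Hypothetical discrete action set, for illustration only.
ACTIONS = ["brake", "hold_speed", "accelerate"]


def softmax(logits):
    """Convert raw scores into a probability distribution."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def positive_loss(logits, good_action):
    """Imitation term: negative log-likelihood of the demonstrated action."""
    probs = softmax(logits)
    return -math.log(probs[ACTIONS.index(good_action)])


def negative_loss(logits, bad_action):
    """Unlikelihood term: grows as the policy puts mass on a known-bad action."""
    probs = softmax(logits)
    return -math.log(1.0 - probs[ACTIONS.index(bad_action)])


def combined_loss(logits, good_action=None, bad_action=None, neg_weight=1.0):
    """Sum whichever terms are available for this training example."""
    loss = 0.0
    if good_action is not None:
        loss += positive_loss(logits, good_action)
    if bad_action is not None:
        loss += neg_weight * negative_loss(logits, bad_action)
    return loss


# Assumed scenario: red light ahead, with "accelerate" labeled as a bad action.
cautious = [2.0, 0.0, -2.0]   # policy that mostly brakes
reckless = [-2.0, 0.0, 2.0]   # policy that mostly accelerates
loss_cautious = combined_loss(cautious, bad_action="accelerate")
loss_reckless = combined_loss(reckless, bad_action="accelerate")
print(f"cautious: {loss_cautious:.3f}, reckless: {loss_reckless:.3f}")
```

With only a negative label available, the reckless policy still receives a much larger loss than the cautious one, which is the kind of training signal a positive-only imitation setup cannot provide.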

The practical result of this architecture is a compression of the R&D iteration cycle from approximately five days to twelve hours. That number deserves more attention than it typically gets. It doesn’t just mean faster development — it means a qualitatively different research process: one where the team can run experiments, observe results, and adjust approach on a daily cadence rather than a weekly one. At scale, that compounds into a significant capability advantage.
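A quick back-of-the-envelope calculation shows the size of that change. The sketch below assumes that "approximately five days" means 120 hours and that cycles run back to back with no idle time. The 90-day window is an arbitrary choice for illustration, not a figure from the event.

```python
HOURS_PER_DAY = 24

old_cycle_hours = 5 * HOURS_PER_DAY   # "approximately five days", taken as 120 hours
new_cycle_hours = 12                  # "twelve hours"

# How many times faster one iteration completes.
speedup = old_cycle_hours / new_cycle_hours


def cycles_in(days, cycle_hours):
    """Number of full iteration cycles that fit in a window, run back to back."""
    return (days * HOURS_PER_DAY) // cycle_hours


# Assumed 90-day window, chosen only to make the gap concrete.
old_per_quarter = cycles_in(90, old_cycle_hours)
new_per_quarter = cycles_in(90, new_cycle_hours)

print(f"speedup: {speedup:.0f}x")
print(f"cycles per 90 days: {old_per_quarter} before, {new_per_quarter} after")
```

Under these assumptions the team moves from 18 experiment cycles in a quarter to 180, a tenfold increase in opportunities to test a hypothesis and correct course.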

Ruan was also candid about the deployment challenge. Large models face real constraints on edge hardware. His answer was two-pronged: distillation (using the large model to train a smaller one, yielding a small model far superior to one trained from scratch) and time (“trust the trajectory of hardware — a 50MB model that seemed huge in 2017 looks trivial now; the same will happen at every scale”).
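For readers unfamiliar with distillation, the following is a minimal sketch of the standard soft-target formulation: the student is trained to match the teacher's temperature-softened output distribution as well as the ground-truth label. It is a generic textbook version, not DeepRoute's pipeline. The logits, temperature, and weighting `alpha` are made-up values.

```python
import math


def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives a softer distribution."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def kl_divergence(p, q):
    """KL(p || q) for two discrete distributions over the same classes."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def distillation_loss(teacher_logits, student_logits, label,
                      temperature=2.0, alpha=0.5):
    """Weighted sum of a soft-target term (match teacher) and a hard-label term."""
    soft_teacher = softmax(teacher_logits, temperature)
    soft_student = softmax(student_logits, temperature)
    # The T^2 factor keeps the soft term's gradient scale comparable across temperatures.
    soft_term = (temperature ** 2) * kl_divergence(soft_teacher, soft_student)
    hard_term = -math.log(softmax(student_logits)[label])
    return alpha * soft_term + (1 - alpha) * hard_term


# Hypothetical logits over three classes; class 0 is the correct label.
teacher = [4.0, 1.0, -1.0]
aligned_student = [3.5, 1.2, -0.8]      # close to the teacher
misaligned_student = [-1.0, 1.0, 4.0]   # disagrees with the teacher
loss_aligned = distillation_loss(teacher, aligned_student, label=0)
loss_misaligned = distillation_loss(teacher, misaligned_student, label=0)
print(f"aligned: {loss_aligned:.3f}, misaligned: {loss_misaligned:.3f}")
```

The soft targets carry information about how the teacher ranks the wrong answers, which is one common explanation for why a distilled small model can outperform one trained from scratch on labels alone.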

Data as Infrastructure: The Flywheel Logic

DeepRoute’s current market position is not incidental to its technical strategy — it is the strategy. The 300,000+ vehicles on the road running DeepRoute’s system have generated over 1.3 billion kilometers of real-world driving data and 44.8 million hours of active usage in the past year alone.
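As a sanity check on those figures, the quoted totals can be turned into rough averages. The calculation below treats the fleet as a constant 300,000 vehicles for the whole year, which is a simplifying assumption: the fleet presumably grew during the year, so the per-vehicle averages are indicative only.

```python
# Figures as quoted in the article.
total_km = 1.3e9          # kilometers of real-world driving data in the past year
total_hours = 44.8e6      # hours of active usage in the past year
fleet_size = 300_000      # vehicles on the road (assumed constant, a simplification)

# Average speed while the system is active.
avg_speed_kmh = total_km / total_hours

# Rough per-vehicle averages under the constant-fleet assumption.
km_per_vehicle = total_km / fleet_size
hours_per_vehicle = total_hours / fleet_size

print(f"average active speed: {avg_speed_kmh:.1f} km/h")
print(f"per vehicle: {km_per_vehicle:.0f} km, {hours_per_vehicle:.0f} hours")
```

The implied average speed of roughly 29 km/h is consistent with a system used mostly in urban traffic, which fits the urban NOA positioning described above.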

This isn’t primarily a commercial achievement; it’s a data flywheel. Physical AI systems, unlike language models, cannot be trained primarily on pre-existing internet data. The data has to come from real-world deployment. Every additional vehicle running DeepRoute’s system produces more training signal for the Foundation Model, which improves the system, which makes it more attractive to OEM partners, which expands deployment. The 2026 target of one million vehicles on the road is, in this light, not just a sales goal but a model training goal.

Zhou was explicit about the inflection point: “Once you’re above two million vehicles per year, the marginal cost of additional data decreases. At that point, data stops being the bottleneck.” The current 300,000-vehicle position is designed to get DeepRoute to that threshold.

The roadmap is ambitious but specific: 1,000+ km MPCI by end of 2026, user high-frequency activation rate above 50%, and the cabin-driving integrated Agent entering production. These targets are interdependent — higher MPCI builds user trust, higher activation generates more data, more data improves MPCI.

Path Competition: A Global Frame

The Physical AI frame places DeepRoute in a global competition that extends beyond the autonomous driving market. The key technical contenders — Tesla, Waymo, and an increasingly capable Chinese ecosystem — are converging on a similar insight: that the path to reliable autonomous systems runs through unified foundation models trained at scale on real-world data, not through the progressive refinement of specialized small models.

Zhou was respectful but direct about Tesla’s position: “Tesla has already hit 1,000 km MPCI. We don’t know if they can go from 1,000 to 10,000 — that depends on whether their multimodal approach can scale further. But if it can, that’s a USD 4 trillion company. That’s what Musk is betting on.”

The roundtable discussion surfaced a more nuanced debate on technical architecture. Xu Yinghao, formerly of Ant Group’s embodied intelligence team, argued that VLA (Vision-Language-Action) models and world models are not competing approaches but complementary ones: VLA provides the deployment-ready capability, world models provide the data synthesis that makes VLA training possible at scale. Alibaba Cloud’s Huo Jian pointed to reinforcement learning as the paradigm most likely to break the ceiling on both: “The pattern of labeled data driving model improvement is being superseded.”

Where does DeepRoute fit in this picture? Its approach, a unified foundation model plus scaled real-world data, is neither purely simulation-dependent nor purely end-to-end in the Tesla sense. The 12-hour iteration cycle is designed precisely to make the distinction less important: if you can run high-quality experiments fast enough, the choice of architectural paradigm becomes less determinative than the quality of the iteration process itself. “True leadership isn’t a model at a point in time,” Ruan said. “It’s how you organize the research process to keep improving.”

The Power Structure Question

If Physical AI becomes a genuine infrastructure layer — comparable in significance to mobile connectivity or cloud computing — then the competitive dynamics between three categories of players will be reshaped: technology giants (who own foundation model capabilities), automakers (who own manufacturing scale and real-world deployment channels), and autonomous driving specialists (who own the engineering bridgework between the two).

Maxwell Zhou’s framing at the roundtable was pointed on this: the companies that will define the outcome are not those that capture the largest market share in the near term, but those that build capabilities that cannot be easily replicated. In his view, the Physical AI infrastructure layer will ultimately be owned by whoever does the hard foundational work — the architecture-level research, the data systems, the iteration methodology — not by whoever deploys the most aggressively in the short term. It is a bet on depth over speed, and on compound returns from genuine technical ownership rather than execution against a borrowed blueprint.

DeepRoute’s self-positioning as infrastructure, rather than as a product or a feature set, is a deliberate move in this dynamic. Infrastructure markets are not typically winner-take-all in the way software product markets are; they tend toward oligopoly with high switching costs. If that logic holds for Physical AI, a 200,000-vehicles-per-year operation generating unique training data from real-world deployment is a more defensible position than any single model architecture.

The China Advantage — and Its Limits

China’s role in this story is worth examining carefully. The country’s EV market penetration rate, complex urban environments, and regulatory tolerance for large-scale testing have created conditions that are, at minimum, highly favorable for the kind of data-intensive Physical AI development DeepRoute is pursuing. “One in every three new urban NOA vehicles in China runs on our system” is not just a commercial metric — it reflects the density of real-world physical AI deployment that is simply harder to achieve at comparable scale elsewhere.

The “technology-scenario-data-business” rapid iteration loop that Zhou described is arguably more executable in China’s current market than in any other geography. The combination of willingness to deploy partially-capable AI systems at scale, a manufacturing ecosystem that can execute on high-volume OEM agreements, and a talent pipeline shaped by years of foundation model research — including researchers like Ruan Chong who have worked at the frontier of both language and multimodal AI — creates a specific advantage.

The harder question is whether that advantage compounds over time or faces ceiling effects. Physical AI deployment generates unique value from diversity and complexity of scenarios, not just volume. China’s urban environments provide the former. But trust, regulation, and societal willingness to rely on AI systems for safety-critical physical tasks will determine how far the deployment envelope can extend — in China and globally.

What This Moment Means

DeepRoute’s Beijing Auto Show announcement was, in the end, not about any single product or metric. It was about a bet on which kind of AI company matters in the next decade.

The claim that Physical AI represents the next major competitive arena after language AI is not unique to DeepRoute — it’s becoming a consensus view across the industry. What DeepRoute is arguing is that within that arena, the decisive advantage will go to companies that can close the data-model-deployment flywheel fastest, at the highest level of real-world complexity, with the talent capable of operating frontier research methods in a physical context.

Ruan Chong’s arrival, the 12-hour iteration cycle, the 300,000-vehicle data asset, and the 1,000 km MPCI target are all components of the same argument. Whether that argument proves correct — whether DeepRoute can translate a strong market position in Chinese urban NOA into genuine Physical AI infrastructure — will be one of the more consequential questions in global technology over the next few years.

What is already visible is that the framing of competition has changed. The question is no longer which ADAS supplier has the best performance on a benchmark. It’s which company is building the layer of AI capability that the physical world will run on. That’s a different kind of race, and Beijing Auto Show 2026 made it unmistakably clear that the race has begun.

